Most marketers describe SEO as a moving target. Every few years, Google “changes the rules,” rankings shift, and everything that used to work suddenly stops working.

Google rolled out Panda. Then Penguin. Then Hummingbird. Later came RankBrain, BERT, the Helpful Content update, and most recently AI-driven search experiences. Each wave created anxiety and urgency across the industry.

It feels like a reset every time.

That story is wrong.

Google’s objective has not changed since the beginning. Its mission has always been to rank the most relevant, useful result for a query. What changed from 2010 to the present is not the goal. It is Google’s ability to measure quality, intent, authority, and satisfaction with increasing precision.

The real story of SEO is not a sequence of dramatic pivots. It is a steady expansion in measurement capability. When you understand that pattern, you stop reacting to updates and start aligning with the trajectory.

For agencies like Get The Clicks, this distinction matters. As outlined in their strategic brand review, their positioning is rooted in data collection, reverse engineering, and proprietary AI monitoring rather than guesswork. That approach mirrors the broader evolution of search itself: better measurement produces better decisions.

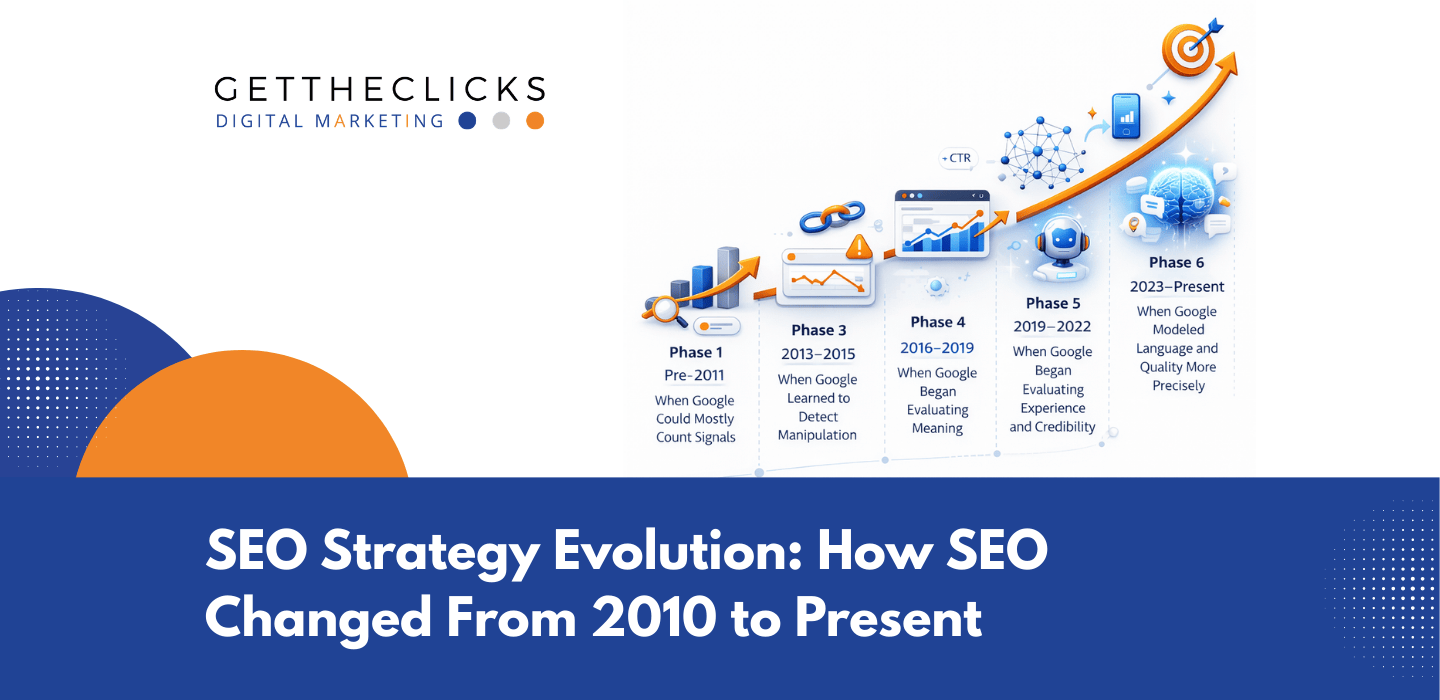

To understand how SEO evolved, we need to trace what Google learned to measure at each stage.

Phase 1 (Pre-2011): When Google Could Mostly Count Signals

In 2010, SEO worked because Google relied heavily on countable signals. The dominant levers were straightforward and highly mechanical:

- Keyword frequency

- Exact match anchor text

- Backlink quantity

- Exact match domains

Google’s early ranking systems were strong at string matching and link counting. If a page repeated a keyword frequently and received links using that same phrase, the system interpreted this as relevance and authority. Search was largely lexical, matching words to words.

Relevance still mattered, but shallow optimization often outperformed genuinely useful content because Google’s evaluation depth was limited. A 300-word article with aggressive keyword usage could outrank a comprehensive guide if the signals aligned properly.
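To make the mechanics concrete, here is a toy sketch of purely lexical scoring. It is not Google's algorithm, and the `keyword_density` function and both sample texts are hypothetical illustrations. It only shows why a short, keyword-heavy page can beat a more useful one when the only thing being measured is how often a phrase appears.

```python
import re


def keyword_density(text: str, phrase: str) -> float:
    """Share of the words in `text` taken up by exact occurrences of `phrase`."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    target = phrase.lower().split()
    if not words or not target:
        return 0.0
    n = len(target)
    # Count every position where the phrase appears word for word.
    hits = sum(1 for i in range(len(words) - n + 1) if words[i:i + n] == target)
    return hits * n / len(words)


# Hypothetical page snippets, invented for illustration only.
stuffed = "Orlando plumber. Best Orlando plumber. Call an Orlando plumber today."
guide = (
    "Before you hire anyone to repair a leak, check licensing, ask for a written "
    "estimate, and compare warranties. A good Orlando plumber will explain the options."
)

print(f"{keyword_density(stuffed, 'orlando plumber'):.2f}")  # 0.60
print(f"{keyword_density(guide, 'orlando plumber'):.2f}")    # 0.08
```

Under a counting model, the stuffed snippet wins by a wide margin even though the guide is the one that actually helps a reader.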

This enabled what we might call signal amplification SEO. The dominant playbook looked like this:

- Produce multiple pages targeting slight keyword variations

- Acquire as many links as possible

- Optimize anchor text aggressively

That behavior was not irrational. It was rational within the measurement constraints of the algorithm. The weakness was not SEO itself. The weakness was limited quality evaluation.

That limitation would not last.

Phase 2 (2011–2012): When Google Learned to Detect Manipulation

Panda and Penguin marked a turning point. Google shifted from counting signals to classifying patterns.

Panda introduced large-scale quality detection. Instead of evaluating pages in isolation, Google began identifying statistical characteristics associated with low-value content, including:

- Thin pages with minimal substance

- Duplicate or heavily templated content

- High ad-to-content ratios

- Shallow, non-original information

Low word count was not inherently bad. Product pages and concise answers remained valid. The issue was utility relative to intent. Panda reframed the evaluation from surface quantity to substantive usefulness.

Penguin applied similar logic to link profiles. Rather than asking “How many links?”, Google began asking whether the link profile exhibited manipulation patterns, such as:

- Excessive exact match anchor ratios

- Sudden unnatural link spikes

- Networks of low-quality referring domains

Links continued to matter. Artificial links lost leverage.
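The first pattern in that list, exact match anchor ratios, can be sketched in a few lines. This is an illustration under stated assumptions, not a reconstruction of Penguin: the `exact_match_ratio` function and both link profiles are hypothetical, and Google has never published a cutoff, so the sketch compares profiles rather than applying a threshold.

```python
def exact_match_ratio(anchors: list[str], target_phrase: str) -> float:
    """Fraction of inbound link anchors that exactly match the target keyword phrase."""
    if not anchors:
        return 0.0
    target = target_phrase.strip().lower()
    exact = sum(1 for anchor in anchors if anchor.strip().lower() == target)
    return exact / len(anchors)


# Hypothetical link profiles, invented for illustration only.
natural = [
    "Acme Plumbing", "acmeplumbing.example", "this guide",
    "click here", "Orlando plumber", "Acme",
]
manipulated = ["Orlando plumber"] * 8 + ["Acme Plumbing", "click here"]

print(f"{exact_match_ratio(natural, 'orlando plumber'):.0%}")      # 17%
print(f"{exact_match_ratio(manipulated, 'orlando plumber'):.0%}")  # 80%
```

An earned profile tends to be dominated by brand names, URLs, and generic phrases. A profile where most anchors repeat the money keyword looks manufactured, which is the kind of pattern a classifier can learn to discount.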

This phase eliminated shortcuts without redefining relevance. Authority still mattered. It simply had to be earned in ways that were harder to fake.

Phase 3 (2013–2015): When Google Learned to Understand Meaning

With Hummingbird and RankBrain, Google moved beyond keyword matching toward semantic interpretation. The algorithm began interpreting entities, relationships, and contextual intent rather than isolated strings.

Hummingbird shifted search from matching phrases to understanding meaning. A query like “best place to fix a cracked iPhone screen near me” requires interpreting:

- Service type

- Device entity

- Local intent

- Proximity weighting

Exact keyword repetition became less critical than topical alignment.

RankBrain introduced machine learning to interpret unfamiliar or ambiguous queries. Instead of relying solely on pre-programmed rules, Google analyzed historical patterns to infer intent.

The unit of optimization shifted from the individual keyword to the topic, aligned with intent. One comprehensive resource could rank for hundreds of variations because Google now understood conceptual relationships.

For a data-driven agency like Get The Clicks, this reinforces why reverse engineering intent matters more than chasing isolated phrases. When Google models meaning, strategy must model meaning.

Phase 4 (2016–2019): When Google Began Evaluating Experience and Credibility

As spam detection and semantic understanding matured, Google expanded its evaluation surface. Relevance alone was no longer sufficient if the experience was poor or the source lacked credibility.

Mobile-first indexing aligned search evaluation with actual user behavior. The mobile version of a website became the primary reference point for indexing and ranking. Structural consistency and usability became foundational requirements rather than enhancements.

Core Web Vitals, announced in 2020 and folded into ranking in 2021, later formalized this direction with measurable experience thresholds focused on:

- Loading performance

- Visual stability

- Interactivity

These metrics function primarily as constraints. Extremely poor performance can suppress visibility. Adequate performance prevents suppression. Speed improvements rarely rescue irrelevant content, but they remove structural friction.
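The threshold idea is easy to see in code. The sketch below uses the "good" and "poor" boundaries Google publishes for the three Core Web Vitals, with INP as the interactivity metric (it replaced FID in 2024). The `rate` function and the sample page values are hypothetical, and how these ratings feed into ranking is not public, so treat this as a reporting aid rather than a ranking model.

```python
# Published boundaries for each metric: (upper limit for "good", upper limit for "needs improvement").
THRESHOLDS = {
    "LCP": (2.5, 4.0),   # Largest Contentful Paint, seconds (loading performance)
    "INP": (200, 500),   # Interaction to Next Paint, milliseconds (interactivity)
    "CLS": (0.1, 0.25),  # Cumulative Layout Shift, unitless (visual stability)
}


def rate(metric: str, value: float) -> str:
    """Classify a measured value as good, needs improvement, or poor."""
    good_limit, poor_limit = THRESHOLDS[metric]
    if value <= good_limit:
        return "good"
    if value <= poor_limit:
        return "needs improvement"
    return "poor"


# Hypothetical field measurements for a single page.
page = {"LCP": 3.1, "INP": 180, "CLS": 0.31}

for metric, value in page.items():
    print(metric, rate(metric, value))
# LCP needs improvement
# INP good
# CLS poor
```

Read this way, the metrics behave like pass/fail gates: the goal is to clear the "poor" band on every metric, not to chase marginal speed gains on a page that already passes.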

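In parallel, E-A-T (expertise, authoritativeness, trustworthiness) took on greater weight in how quality was assessed, and in late 2022 it was extended to E-E-A-T with the addition of experience. Google began inferring credibility through external references, brand signals, and reputational consistency.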

Trust inference now draws from signals such as:

- Author presence and credentials

- Brand mentions and citations

- Industry affiliations and certifications

- Consistency across web properties

This is where brand positioning intersects with SEO. As described in the GTC strategic review, Get The Clicks emphasizes proprietary AI systems, Google certifications, structured reporting, and community involvement. These are not aesthetic add-ons. They reinforce credibility signals that modern search systems evaluate implicitly.

SEO has expanded beyond technical optimization. It now overlaps directly with brand authority.

Phase 5 (2019–2022): When Google Modeled Language and Quality More Precisely

BERT enhanced contextual language modeling. Instead of interpreting keywords independently, Google evaluated words relative to the surrounding context. Subtle phrasing differences became easier to interpret, particularly for conversational queries.

This reduced the need for awkward keyword targeting and increased the importance of comprehensive topic coverage.

The Helpful Content Update introduced site-level quality classification. Rather than analyzing pages individually, Google assessed overall publishing patterns and intent alignment.

The difference became visible between:

- High-volume, surface-level content designed primarily for search engines

- In-depth, problem-aligned resources designed for users

Two agencies may publish the same number of articles. One produces minor keyword variations. The other builds structured, insight-driven resources. Under stricter modeling, depth compounds while commoditized volume destabilizes.

For local service providers and legal professionals, the core audience identified in the GTC brand review, this distinction is critical. Trust-heavy industries cannot rely on thin publishing strategies. Authority must be demonstrated.

The shift was not anti-content. It was anti-commoditized content.

Phase 6 (2023–Present): When Google Began Generating Answers

The most recent shift is answer synthesis. AI Overviews and expanded featured snippets represent a structural evolution in how information is delivered.

Google can now extract, summarize, and combine information from multiple sources directly within the search interface. Visibility is no longer determined solely by ranking position. It increasingly depends on extractability and clarity.

Content now needs to be:

- Structurally clear

- Direct in answering questions

- Logically organized

- Supported by trust signals

Not every query triggers AI summaries. Transactional and navigational searches often remain traditional. However, informational queries increasingly produce hybrid experiences where impressions may not convert directly into clicks.

This does not eliminate SEO value. It shifts measurement toward visibility, citation presence, and assisted conversions. Agencies that prioritize structured data analysis and reporting, as emphasized in the Get The Clicks positioning, are better equipped to interpret these shifts.

What Never Changed From 2010 to Present

Despite fifteen years of evolution, four principles remained constant:

- Relevance

- Authority

- Accessibility

- User satisfaction

In 2010, these were approximated through surface signals. Today, they are evaluated through layered systems that include semantic modeling, pattern recognition, behavioral alignment, experience thresholds, trust inference, and answer synthesis.

Across every phase, the trajectory follows a clear progression:

- Count signals

- Detect manipulation

- Understand meaning

- Evaluate experience

- Model satisfaction

- Generate answers

The direction did not change. The measurement improved.

For businesses competing in markets like Orlando and Tampa, where Get The Clicks is focused on expansion, the lesson is not to chase updates. It is to align with what Google is progressively improving at measuring.

When a website is treated as a sales tool, when campaigns are backed by structured data, and when authority is built rather than simulated, SEO becomes durable instead of reactive.

The rules never changed.

The measurement did.

Conclusion: SEO Was Never Chaos. It Was Calibration.

SEO did not reset every few years.

It matured.

From 2010 to today, Google did not change its objective. It refined its ability to measure relevance, authority, experience, and satisfaction with increasing precision. Each update reduced noise. Each advancement narrowed the gap between manipulation and merit.

The businesses that struggled treated updates as shocks. The businesses that adapted recognized a pattern: better measurement rewards better fundamentals.

That is why modern SEO cannot rely on guesswork, volume tactics, or surface optimization. It requires structured data collection, reverse engineering of search behavior, and continuous refinement. It requires treating a website as a sales tool, not just a marketing asset. And it requires visibility into what is actually working.

At Get The Clicks, that philosophy is operational, not theoretical. Measurement comes first. Strategy follows data. Optimization is continuous. When marketing is built on deeper signal interpretation rather than reaction, results become more predictable.

If you are a local service business or legal professional navigating uncertainty around AI, search changes, and rising competition in markets like Orlando or Tampa, the question is not “What changed this time?”

The better question is about your alignment with the direction of measurement.

Are you aligned with what search is getting better at measuring?

If you want clarity grounded in real data rather than speculation, contact Get The Clicks. Let’s turn “Less Argghhh” into “More Ahhhhh.”